How to prompt GPT-5.4 in finance

OpenAI just released GPT-5.4, their most capable model yet. I wrote a prompting guide to help CFOs get the best out of it.

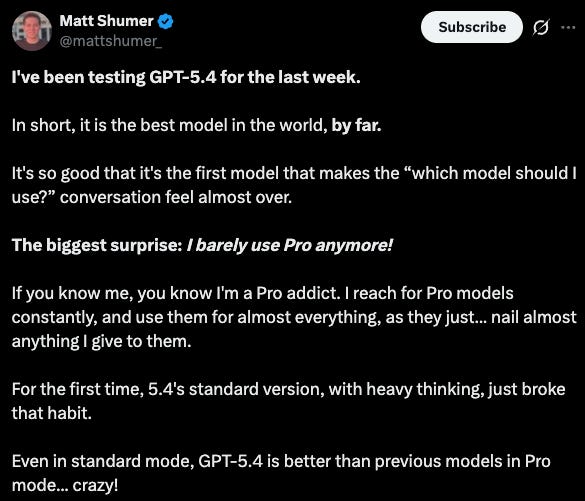

GPT-5.4 just dropped and it’s a different animal.

It reasons better. It codes better. It can look at a browser screenshot, click through interfaces, send emails, and schedule meetings on its own.

But the part that matters for finance is this: it stays on track when the work gets complex. It doesn’t lose its place halfway through a multi-step task. And it actually finishes what you ask it to do.

That’s a big deal because finance work is never one step.

You pull data from one system, cross-reference it against another, apply rules, check for exceptions, and produce a summary that someone else needs to trust.

Earlier models would get lost somewhere in the middle. GPT-5.4 was built to handle that entire chain.

But here’s the thing.

The model only works as well as the instructions you give it.

OpenAI published a prompting guide alongside the release. It’s aimed at developers and engineers. Dense, technical, and full of XML blocks and API references.

Most CFOs will never read it.

So I did. And I pulled out the patterns that matter for finance teams.

Let’s dive in.

Tell It Exactly What You Want Back

This is the single biggest unlock.

Most people prompt like this:

Analyze this vendor spend data

That’s like handing a new analyst a spreadsheet and saying “figure it out.”

You’ll get something back. It probably won’t be what you needed.

OpenAI calls the fix an Output contract.

In finance terms, think of it as a reporting template.

Review the attached vendor spend file and return the following in this order:

Total spend by vendor, sorted highest to lowest

Any vendors with spend exceeding their contract value

Contracts expiring in the next 90 days with auto-renewal terms

A two-paragraph executive summary I can forward to the CFO

When you define the structure upfront, the model stops guessing. It follows the template. You get something usable on the first try instead of going back and forth three times.

If you need a table, say table.

If you need markdown, say markdown.

GPT-5.4 is better at sticking to formats than any model before it, but it still needs you to ask.
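If your team reuses the same reporting templates, it can help to generate the output contract from a list of deliverables so the wording stays consistent. The Python sketch below is only an illustration: `build_output_contract` and its parameters are hypothetical names, not part of any OpenAI library.

```python
def build_output_contract(task: str, deliverables: list[str], output_format: str) -> str:
    """Assemble a prompt that states the task, the deliverables in order, and the format."""
    if not deliverables:
        raise ValueError("An output contract needs at least one deliverable.")
    # Number the deliverables so the requested order is explicit.
    numbered = "\n".join(f"{i}. {item}" for i, item in enumerate(deliverables, start=1))
    return (
        f"{task} Return the following in this order:\n"
        f"{numbered}\n"
        f"Format the response as {output_format}."
    )


prompt = build_output_contract(
    task="Review the attached vendor spend file.",
    deliverables=[
        "Total spend by vendor, sorted highest to lowest",
        "Any vendors with spend exceeding their contract value",
        "Contracts expiring in the next 90 days with auto-renewal terms",
        "A two-paragraph executive summary I can forward to the CFO",
    ],
    output_format="a markdown table for items 1 to 3 and plain paragraphs for item 4",
)
print(prompt)
```

The point of the helper is simply that the structure and the format are always stated, never implied.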

Make It Verify Its Own Work

This is the one most finance teams skip. And it’s the one that matters most.

GPT-5.4 can verify its own output before handing it to you. OpenAI calls it a verification loop. I call it the self-review before it goes to the board.

Add this to any prompt where accuracy matters:

Before giving me the final output:

Check that every number ties back to the source data

Confirm that totals match the sum of their line items

Flag any assumptions you made

If anything doesn’t reconcile, tell me what’s off and why

It won’t catch everything.

But it catches a surprising amount of the mistakes that would otherwise land in your report. And in finance, the cost of a wrong number is never zero.
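Because the model’s self-check won’t catch everything, you may want an independent tie-out on your side as well. Here is a minimal Python sketch, assuming you can export the model’s reported totals and the source line items. The function name, vendor names, and amounts are made up for illustration.

```python
from decimal import Decimal


def find_total_mismatches(reported_totals, line_items):
    """Return {vendor: (reported, recomputed)} for every total that does not tie out."""
    # Recompute each vendor total from the source line items.
    recomputed = {}
    for vendor, amount in line_items:
        recomputed[vendor] = recomputed.get(vendor, Decimal("0")) + Decimal(amount)

    mismatches = {}
    for vendor in sorted(set(reported_totals) | set(recomputed)):
        reported = Decimal(reported_totals.get(vendor, "0"))
        actual = recomputed.get(vendor, Decimal("0"))
        if reported != actual:
            mismatches[vendor] = (reported, actual)
    return mismatches


# Illustrative data only: source line items and the totals a model reported.
line_items = [("Acme", "1200.50"), ("Acme", "799.50"), ("Globex", "450.00")]
reported_totals = {"Acme": "2000.00", "Globex": "540.00"}  # Globex digits transposed

mismatches = find_total_mismatches(reported_totals, line_items)
print(mismatches)
```

Amounts are parsed as `Decimal` rather than floats so that cents compare exactly.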

Don’t Let It Quit Early

You ask it to review 14 contracts. It reviews 9 and says “here’s the summary.”

You ask for a full reconciliation. It matches the obvious ones and stops.

This is the most common problem with AI in finance. Incomplete execution. GPT-5.4 can be told to keep going until the job is actually done.

Do not stop until every item is covered. If you can’t resolve a line item, mark it as [unresolved] and explain what’s missing. Track your progress and confirm you’ve addressed every row in the dataset before giving me the final output.

For batch work like reviewing a vendor list, reconciling a quarter of invoices, or checking every contract for renewal traps, that one instruction changes everything.
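You can also confirm coverage yourself instead of taking the model’s word for it. The sketch below assumes each item has an ID and that you asked the model to return a status per ID. `check_coverage` and the contract IDs are hypothetical.

```python
def check_coverage(source_ids, model_results):
    """Compare the IDs the model reported on against the IDs that were sent.

    model_results maps an item ID to a status string such as
    "resolved" or "[unresolved]".
    """
    sent = set(source_ids)
    returned = set(model_results)
    return {
        # Items the model silently skipped.
        "missing": sorted(sent - returned),
        # Items in the output that were never in the source.
        "unexpected": sorted(returned - sent),
        # Items the model looked at but could not resolve.
        "unresolved": sorted(
            item for item, status in model_results.items() if status == "[unresolved]"
        ),
    }


# Illustrative data only.
source_ids = ["C-001", "C-002", "C-003", "C-004", "C-005"]
model_results = {
    "C-001": "resolved",
    "C-002": "[unresolved]",
    "C-003": "resolved",
    "C-005": "resolved",
}

report = check_coverage(source_ids, model_results)
print(report)
```

Anything under `missing` is a row the model skipped without saying so, which is exactly the failure the instruction above is meant to prevent.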

Tell It What To Do When It Gets Stuck

AI models hit walls. A lookup returns nothing. A name doesn’t match. A document is missing a field. The default behavior is to skip it or give you something vague.

Finance data is never clean. Vendor names are spelled differently across systems. Contract terms are buried in PDFs. Cost centers change between quarters.

A model that gives up at the first mismatch is useless.

So tell it not to:

If a search returns no results or incomplete data, try at least two different approaches before telling me nothing was found. For example, try alternate spellings, broader search terms, or a different source. Only report “not found” after you’ve exhausted your options, and tell me what you tried.

In finance, that persistence matters: a failed first lookup usually means the name or format differs between systems, not that the record is missing.
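The same fallback idea can be expressed in code, which may help clarify what “try at least two different approaches” means for vendor names. This Python sketch tries an exact match, then a normalized match, then a fuzzy match, and records what it tried. The suffix list, the 0.8 similarity cutoff, and the vendor names are assumptions chosen for illustration.

```python
import difflib
import re

# Assumed list of legal suffixes to ignore; extend it for your own vendor data.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "gmbh", "bv", "corp", "co"}


def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and drop common legal suffixes."""
    words = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(w for w in words if w not in LEGAL_SUFFIXES)


def find_vendor(name, register):
    """Try exact, normalized, then fuzzy matching; return (match, attempts)."""
    attempts = ["exact"]
    if name in register:
        return name, attempts

    attempts.append("normalized")
    by_normalized = {normalize(entry): entry for entry in register}
    key = normalize(name)
    if key in by_normalized:
        return by_normalized[key], attempts

    attempts.append("fuzzy")
    close = difflib.get_close_matches(key, list(by_normalized), n=1, cutoff=0.8)
    if close:
        return by_normalized[close[0]], attempts

    # Nothing found: the attempts list documents what was tried.
    return None, attempts


register = ["Acme Industries Ltd.", "Globex Corporation", "Initech LLC"]
print(find_vendor("ACME Industries", register))
print(find_vendor("Globx Corporation", register))
print(find_vendor("Umbrella Partners", register))
```

Fuzzy matches should still be reviewed by a person, since a close spelling is not proof that two names are the same legal entity.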

Match the Thinking to the Task

GPT-5.4 lets you control how hard it thinks.

OpenAI calls it reasoning effort. It ranges from none to extreme.

Most people crank everything to high. That’s like asking a senior partner to do data entry.

None or Low for the simple stuff. Reformatting data, extracting fields from a document, generating a template. The model doesn’t need to think here and making it do so wastes time and money.

Medium is the sweet spot for most finance work. Analyzing spend trends, summarizing contract terms, comparing actuals to budget, reviewing a board deck for inconsistencies.

High for the work that requires real judgment. Multi-document synthesis, identifying hidden risks across a portfolio of contracts, reconciling conflicting data, building a narrative around financial performance.

Match the effort to the task. You’ll save time and get better results.
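If you call the model through the API rather than a chat window, one way to apply this is a simple lookup from task type to effort level. The sketch below only builds a request payload and does not send anything. The task names are hypothetical, the `gpt-5.4` model identifier is an assumption, and the `reasoning` effort field reflects my understanding of OpenAI’s Responses API, so check the current documentation for the exact accepted values.

```python
# Hypothetical task categories, grouped the way the tiers above describe them.
EFFORT_BY_TASK = {
    "reformat_data": "low",
    "extract_fields": "low",
    "generate_template": "low",
    "analyze_spend_trends": "medium",
    "summarize_contract_terms": "medium",
    "compare_actuals_to_budget": "medium",
    "multi_document_synthesis": "high",
    "reconcile_conflicting_data": "high",
}


def build_request(task_type: str, prompt: str, model: str = "gpt-5.4") -> dict:
    """Return a request payload; unknown task types fall back to medium effort."""
    effort = EFFORT_BY_TASK.get(task_type, "medium")
    # Assumed payload shape; verify field names against the API reference.
    return {"model": model, "reasoning": {"effort": effort}, "input": prompt}


request = build_request("extract_fields", "Extract the invoice number and due date.")
print(request)
```

Defaulting unknown tasks to medium follows the advice above that medium is the sweet spot for most finance work.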

Use It for Research

GPT-5.4 is significantly better at research than earlier models. OpenAI built a three-pass pattern that works well for finance:

I need you to research this in three passes:

Plan: List the key questions we need to answer

Retrieve: Search for data on each question and follow any leads that come up

Synthesize: Bring it all together, resolve any contradictions, and give me a final analysis with sources

Only stop when more searching is unlikely to change the conclusion.

This works for competitor analysis, market research for board presentations, regulatory impact, or understanding how a new accounting standard affects your reporting.

The three-pass structure forces thoroughness instead of giving you the first thing it finds.

Ground Every Claim in Evidence

Finance lives and dies on credibility. If the model produces a number, you need to know where it came from.

Only make claims based on the data I provided or what you found through search. If sources conflict, tell me both sides and where they disagree. Never fabricate a citation, a URL, or a statistic. If you can’t support a statement, say so.

This matters for anything that ends up in a board deck, an audit file, or a regulatory filing. The model is good at sounding confident. Your job is making sure that confidence is earned.

Chain It All Together

The real power isn’t single prompts. It’s chaining steps into a workflow the model runs end-to-end. Here’s a vendor risk workflow:

I need you to complete the following workflow. Do each step in order and don’t skip any:

Step 1: Review the attached vendor spend file and identify all vendors with spend over $50,000 and no contract on file

Step 2: For each of those vendors, check the contract register to see if a contract exists under a different name

Step 3: For any vendor still unmatched, flag it as maverick spend

Step 4: Review all active contracts and identify any expiring in the next 90 days

Step 5: Check whether the notice period for each expiring contract has already passed

Step 6: Write a risk brief summarizing maverick spend, concentration risk, and contract renewal traps

Step 7: Before delivering the brief, verify that every number ties back to the source files

If you get stuck on any step, tell me what’s blocking you instead of skipping it.

That’s seven steps. Earlier models would get lost by step 4 or 5.

GPT-5.4 is designed to track its place, maintain context across steps, and actually finish. The key is writing the workflow out explicitly so the model knows what done looks like.
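Steps 4 and 5 are pure date arithmetic, so they are easy to spot-check against the model’s answer. Here is a minimal Python sketch, assuming each contract record carries an expiry date and a notice period in days. The field names, vendors, and dates are invented for illustration.

```python
from datetime import date, timedelta


def renewal_risks(contracts, today, window_days=90):
    """Flag contracts expiring within the window and whether the notice deadline has passed."""
    flagged = []
    for contract in contracts:
        days_left = (contract["expires"] - today).days
        if 0 <= days_left <= window_days:
            # Last day on which notice can still be given.
            notice_deadline = contract["expires"] - timedelta(days=contract["notice_days"])
            flagged.append({
                "vendor": contract["vendor"],
                "expires": contract["expires"],
                "notice_deadline": notice_deadline,
                "notice_passed": today > notice_deadline,
            })
    return flagged


# Illustrative data only.
contracts = [
    {"vendor": "Acme", "expires": date(2025, 4, 15), "notice_days": 60},
    {"vendor": "Globex", "expires": date(2025, 5, 20), "notice_days": 30},
    {"vendor": "Initech", "expires": date(2025, 12, 31), "notice_days": 90},
]

risks = renewal_risks(contracts, today=date(2025, 3, 1))
for risk in risks:
    print(risk["vendor"], risk["notice_deadline"], risk["notice_passed"])
```

In this example Acme is the renewal trap: the contract is still active, but the window for giving notice has already closed.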

Keep It Honest About What It Doesn’t Know

The most dangerous thing AI can do in finance is sound certain when it shouldn’t be.

If you’re not sure about something, say so. If you’re making an inference rather than stating a fact from the data, label it clearly. If required information is missing, tell me what’s missing instead of filling in the gap with assumptions.

False confidence is more expensive than uncertainty. Every time.

The Bottom Line

GPT-5.4 is the most capable model OpenAI has released for the kind of work finance teams actually do.

Multi-step analysis.

Long documents.

Data reconciliation.

Structured reporting.

But capability without direction is just wasted potential.

The prompts above aren’t complicated. They’re just specific. And in finance, specificity is the difference between an AI that creates work and one that eliminates it.

Pick one workflow. Define the steps. Tell it what done looks like. Check the output. Then run it again next month and watch it get faster.

And that’s all for today.

See you tomorrow!

Whenever you’re ready, there are 2 ways I can help you:

If you’re building an AI-powered CFO tech startup, I’d love to hear more and explore if it’s a fit for our investment portfolio.

I’m Wouter Born. A CFOTech investor, advisor, and founder of finstory.ai

Find me on LinkedIn

Well done. Thanks for the write up.

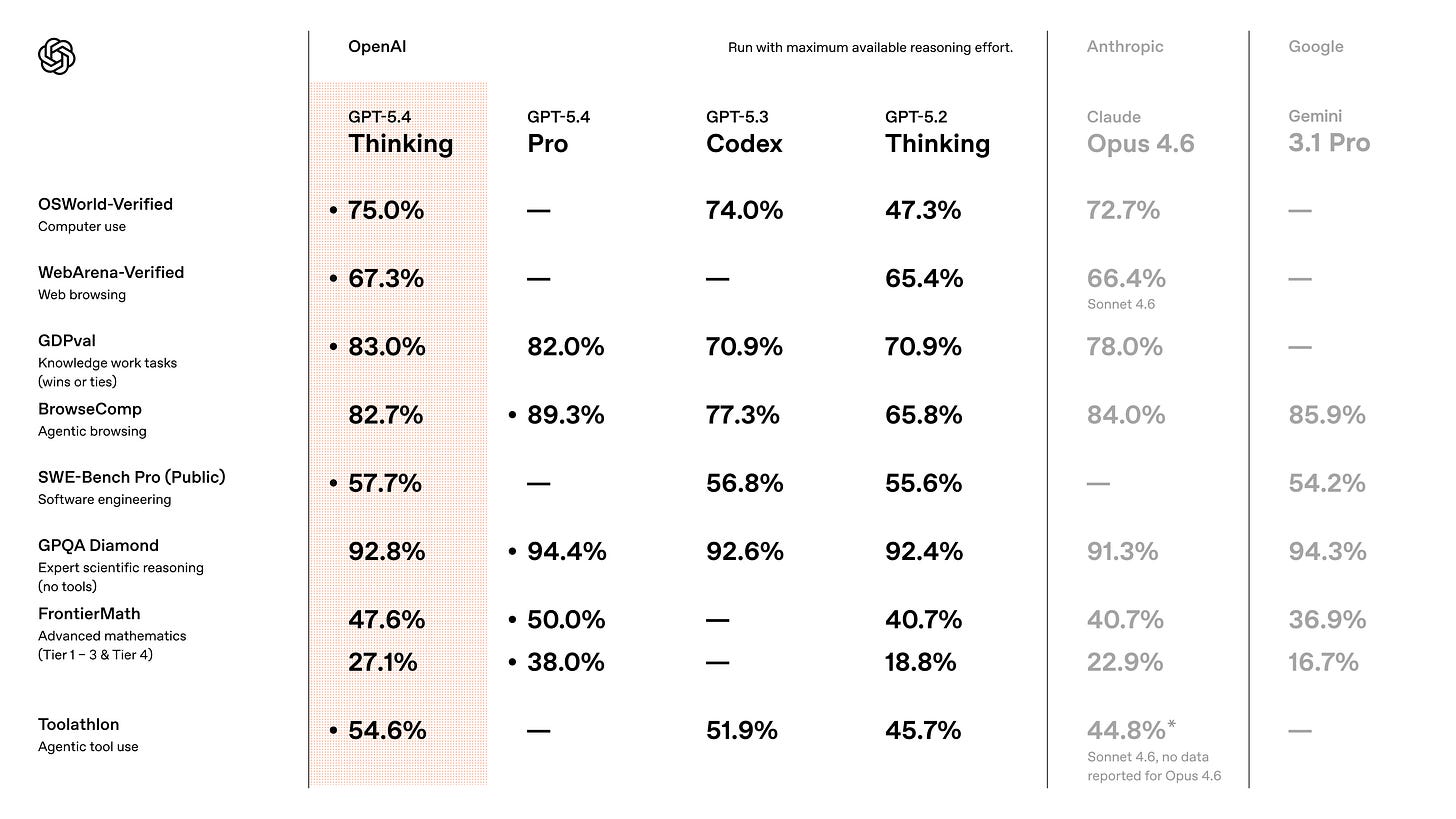

Thanks for the write-up. Still early days, I know, but would you have a view on GPT-5.4 vs. the latest Anthropic models?