7 uncomfortable AI in finance insights every CFO must hear at least once

The most dangerous lies about hallucination, data security, hiring, and the future of finance work. The 7 things from our live insider session that put every CFO ahead of 99% of finance leaders.

Saturday’s edition was the tactical version.

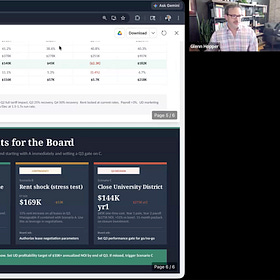

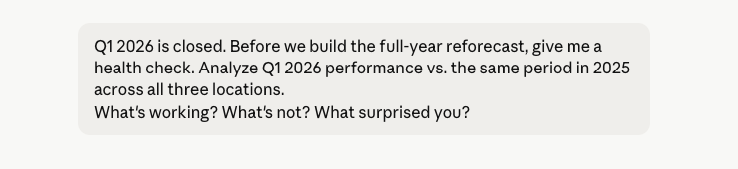

Glenn Hopper’s exact prompts, the dataset, and the full reforecast workflow inside a Claude Project. If you missed it, it’s here.

How to run a monthly reforecast with Claude as a CFO

Yesterday we hosted Glenn Hopper for a live insider session. Glenn has spent 20+ years in finance leadership and now runs robocfo.ai, where he builds AI implementations for the office of the CFO.

Today is the strategic version.

After the live Claude-in-finance demo, we opened it up for Q&A.

The Q&A was where most of the room leaned forward. The questions came from sitting CFOs, controllers, and FP&A leaders who weren’t asking how to build a reforecast. They were asking how to think about AI in their function.

Glenn answered every one of them directly. No vendor-speak. No hedging. Some of his answers will be uncomfortable for your CEO, your IT team, and possibly for you.

Here are the 7 strategic insights from the session that I think every CFO must hear at least once: the most dangerous lies about hallucination, data security, hiring, and the future of finance work.

Let’s dive in.

1. The data privacy concern is mostly a scapegoat

A CFO in the room asked Glenn how comfortable he really feels putting financial data into Claude. It’s the question every CFO is wrestling with right now.

Glenn’s answer was direct:

I firmly believe the data privacy issue has become a scapegoat for being scared of AI. You’re already running your finance stack on AWS, Google Cloud, OneDrive, SharePoint. Those have the same compliance standards as the paid AI tools. If you’re on the Team or Enterprise plan, you have SOC 2 Type 2 certification. That’s the same level as your corporate Office 365 or corporate Gmail.

Then he pushed harder.

The risk isn’t using AI. The risk is locking it down so hard that your employees go around you and use the free version with company data. That’s where the real security problem lives. It’s called Shadow AI, and every company has it.

This is the reframe most CFOs need.

In your IT meeting, you’re probably asking permission to use Claude. But you should already be using it, and the real question is whether you’re on the version that protects your data.

If your company has a strict no-AI policy and your employees are productive, they’re not following the policy. They’re using their personal accounts. And every financial document they paste into the free tier of an AI tool now sits on a server you don’t control, where it may be used as training data.

The question isn’t whether to allow AI.

It’s whether to govern it.

2. Hallucination is a training problem, not a technology problem

Someone asked Glenn how he handles Claude’s tendency to make things up. His answer reframed the whole problem.

The way these models are trained, they read the entire internet. Then humans rate the responses with thumbs up and thumbs down. An inevitable thing happened: when the model said ‘I don’t know,’ humans gave it a thumbs down.

So we’ve inadvertently trained the models to lie. They know their human overlords don’t like ‘I don’t know’ as an answer, so they make something up instead.

This is the part most AI training courses get wrong. Hallucination isn’t a bug. It’s the predictable output of how the models were taught to please the humans rating them.

Glenn’s fix is the one most CFOs underuse:

The way you reduce hallucination is to give it more context. That’s why projects are great. Several years of financial data, the full GL, all the context an employee would have. The more context you give it, the less it makes up.

The lesson?

If Claude is hallucinating in your finance work, the problem isn’t Claude. The problem is that you’re asking it to answer questions without the context an experienced analyst would have.

Load the project with everything.

Multiple years of statements. Vendor contracts. Board minutes. The chart of accounts with notes. The more an AI knows about your business, the less it makes up.

3. The new hire is no longer one person

The most uncomfortable question of the session came from a CFO running a 60-person finance team. He asked Glenn what happens to his junior analyst hires when Claude can do the work in 15 minutes.

Glenn paused before answering. Then:

We’ve all been doing this a while. We have domain expertise. We got there by doing the work that’s now being automated. If I’m fresh out of college with great Excel skills and an internship, how do I compete with AI that does this in a fraction of the time? I don’t have a clean answer.

Then he gave the answer that landed hardest in the room.

What I will say is that when I’m hiring now, I’m not just hiring you. I’m hiring you and your army of agents. The expectation is that you 10x what you’d do alone with the AI tools you bring with you.

Jensen Huang at NVIDIA says:

If you’re paying a developer $500,000 a year and they’re not spending $250,000 on AI tokens, you should question whether that’s the right hire.

We’re going there in finance too.

The new baseline isn’t ‘can you build a model.’ It’s ‘can you direct 10 agents to build 10 models in the time it used to take to build one?’

This is the hiring conversation every CFO will be having within 12 months. The candidates who win the next decade aren’t the ones with the cleanest Excel models.

They’re the ones who arrive on day one with their own AI workflows already running.

If you’re hiring this quarter, ask the question:

Walk me through your AI workflow. What do you automate? What do you delegate to agents?

The candidate who can’t answer is the candidate who’s still doing the 2024 version of the job.

4. Prompt engineering for the chat interface is mostly overrated

This one will surprise people. Glenn doesn’t believe in prompt engineering the way most courses teach it.

I don’t say ‘analyze gross margin variance.’ I say ‘what’s working, what’s not, what surprised you.’ These models are good enough now that prompt engineering for the chat interface is mostly overrated.

Saying I’m good at prompt engineering is like saying I’m good at Googling.

Where prompt engineering DOES matter:

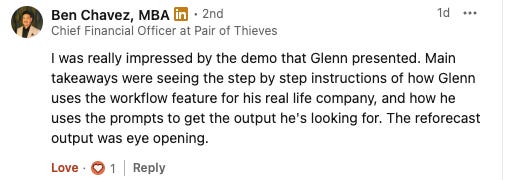

Project instructions. The custom instructions you set once at the project level. This is where you build the safety rails (“flag anything over 5%”), the context (“our fiscal year is calendar year”), and the format preferences (“present at the location level first”).

Skills you reuse over and over. A skill is a markdown file of instructions Claude can pull in whenever that task comes up. If you’re doing month-end close every month, the skill is where the prompt engineering lives, not in the 30 different ways you might phrase the request each month.

Scheduled tasks. The workflows that run automatically. These need precise, deterministic prompts because no human is in the loop to course-correct.
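For readers who want to see what “precise, deterministic” means in practice, here is a minimal Python sketch, assuming a monthly reforecast that runs unattended. The names `build_scheduled_prompt`, `Line`, and `flag_variances` are hypothetical and not part of Claude or any other product. The idea: the prompt text is fixed in advance with the project’s safety rails baked in, and the “flag anything over 5%” rail is also checked in plain code, so a flag doesn’t depend on the model noticing it.

```python
# Hypothetical sketch: a fixed prompt for an unattended monthly reforecast,
# plus a deterministic check of the "flag anything over 5%" safety rail.
from dataclasses import dataclass

# Rails adapted from the project-instruction examples; wording is illustrative.
RAILS = (
    "Flag any line item whose variance to budget exceeds 5%.",
    "Our fiscal year is the calendar year.",
    "Present results at the location level first.",
    "If any input file is missing, stop and say which one.",  # assumed extra rail
)


def build_scheduled_prompt(period: str) -> str:
    """Return identical prompt text on every run; only the period changes."""
    rules = "\n".join(f"- {rule}" for rule in RAILS)
    return (
        f"Run the monthly reforecast for {period}.\n"
        f"Follow these rules exactly:\n{rules}"
    )


@dataclass
class Line:
    name: str
    actual: float
    budget: float


def flag_variances(lines: list[Line], threshold: float = 0.05) -> list[str]:
    """Names of line items whose absolute variance to budget exceeds threshold."""
    flagged = []
    for line in lines:
        if line.budget == 0:
            # Any nonzero actual against a zero budget is worth a look.
            if line.actual != 0:
                flagged.append(line.name)
            continue
        if abs(line.actual - line.budget) / abs(line.budget) > threshold:
            flagged.append(line.name)
    return flagged


lines = [Line("Revenue", 104_000, 100_000), Line("Payroll", 53_000, 50_000)]
print(flag_variances(lines))  # ['Payroll']: 4% is under the rail, 6% is over
```

Running the rule in code as well as stating it in the prompt gives you a check that still works when nobody is watching the run.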

For everything else, meaning the daily back-and-forth in the chat window:

Talk to Claude like you’d talk to your analyst. Conversational. Lazy. Specific to the business question, not the analytical method.

This is the opposite of what most AI training courses teach.

They sell you templates and frameworks for the chat interface. Glenn says the templates belong in the project setup, not the chat window.

5. The million-token context window changes the game

Glenn explained Claude’s context window in a way that finally made it click for the CFOs in the room.

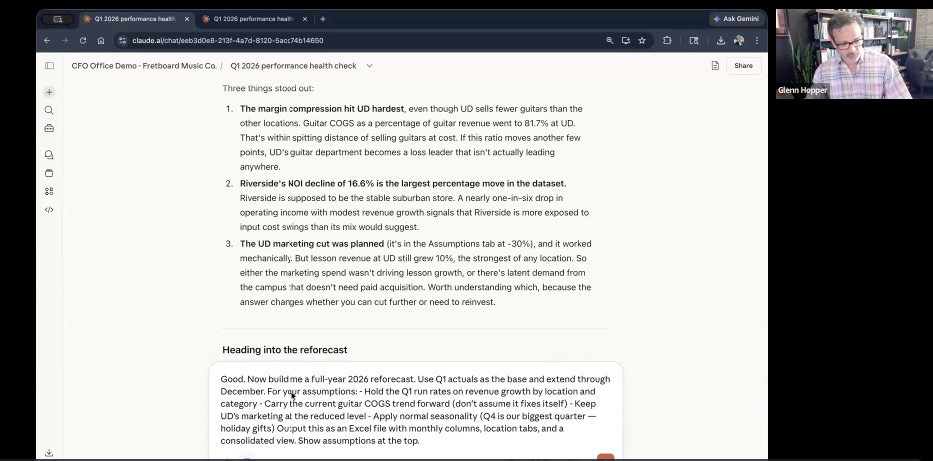

A typical book is 100,000 words. A million tokens is about 750,000 words. That’s 7.5 books’ worth of context in a single conversation.

Then the implication:

In this project, Claude has all our financials, all the previous chat history, the custom instructions, and any skills, all in context at once. That’s why a single conversational prompt can do work that used to require breaking it into 10 separate steps.
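The 7.5-book figure is simple rule-of-thumb arithmetic. Here is a minimal Python sketch, assuming roughly 0.75 English words per token and 100,000 words per book. Both are approximations: real ratios depend on the tokenizer and the content, and spreadsheets full of numbers can tokenize quite differently from prose. `fits_in_context` is a hypothetical helper for a rough pre-check of whether a set of documents could fit in one conversation.

```python
# Rule-of-thumb arithmetic behind "7.5 books". Both constants are approximations;
# actual words-per-token ratios vary by tokenizer and by content.
WORDS_PER_TOKEN = 0.75
WORDS_PER_BOOK = 100_000


def tokens_to_words(tokens: int) -> float:
    return tokens * WORDS_PER_TOKEN


def books_in_context(context_tokens: int) -> float:
    return tokens_to_words(context_tokens) / WORDS_PER_BOOK


def fits_in_context(doc_word_counts: dict[str, int], context_tokens: int) -> bool:
    """Rough check: do these documents fit, by estimated token count?"""
    estimated_tokens = sum(doc_word_counts.values()) / WORDS_PER_TOKEN
    return estimated_tokens <= context_tokens


print(books_in_context(1_000_000))  # 7.5
```

Treat `fits_in_context` as a sanity check, not a guarantee; the token count the model itself reports is the only authoritative number.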

A year ago, you had to chain prompts, strip out formatting, convert spreadsheets to flat CSVs, and break complex questions into bite-sized chunks. Now you can hand Claude an entire project (multiple years of financials, the GL, vendor contracts, and board minutes) and ask one question that requires synthesizing all of it.

Most of the prompt chaining tutorials you’ve watched in the last 18 months are obsolete. The technique they teach (break the task into 10 steps) is no longer necessary for most finance work.

One well-framed question against a properly loaded project does the same work in a fraction of the time.

If you’ve been treating Claude like a calculator that needs careful instructions, try treating it like an analyst with a photographic memory who’s read every document you’ve ever shared with it.

The output will be different.

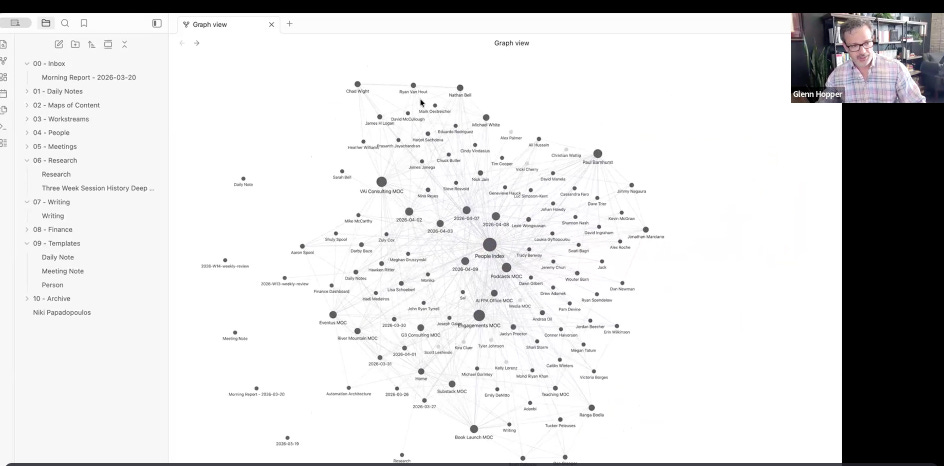

6. The real value of meeting transcripts isn’t the notes

Near the end of the session, someone asked Glenn how he uses meeting transcript tools like Fireflies. His answer surprised the room.

Most people use transcript tools for the obvious reason: action items and meeting notes. Glenn uses them for something more strategic.

The big one is pulling tasks out and dumping them into my Notion so I’m not swivel-chairing data from one system to another. But also, with those transcripts, I do sentiment analysis. I have it read the transcript and ask: what is this person on the call concerned about? What does this person need addressed?

Then the part that stopped the room:

Before every call, I take the transcript from the last call, the action items, and have AI search out the person’s LinkedIn profile, get their background, and build me a cheat sheet. A run of show every time I get on a call. I know who I’m talking to, what their background is, what we talked about in the last call, what their concerns are.

That’s the cheat sheet you used to need an assistant for.
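The pre-call brief comes down to gathering what you already have and asking one question. Here is a minimal Python sketch with `CallContext` and `build_brief_prompt` as hypothetical names. It assumes the person’s background has already been collected (in Glenn’s version, the AI looks up their LinkedIn profile; this sketch fetches nothing) and only assembles the prompt you would send through whatever Claude interface you already use. The sample call details are made up for illustration.

```python
# Hypothetical sketch of the pre-call "run of show": bundle what you already
# have into one prompt. All names and sample data here are illustrative.
from dataclasses import dataclass, field


@dataclass
class CallContext:
    person: str
    background: str        # gathered separately, e.g. from a public profile
    last_transcript: str   # transcript of the previous call
    open_action_items: list[str] = field(default_factory=list)


def build_brief_prompt(ctx: CallContext) -> str:
    items = "\n".join(f"- {item}" for item in ctx.open_action_items) or "- (none)"
    return (
        f"I have a call with {ctx.person}. Build me a one-page cheat sheet.\n\n"
        f"## Background\n{ctx.background}\n\n"
        f"## Open action items\n{items}\n\n"
        f"## Transcript of our last call\n{ctx.last_transcript}\n\n"
        "Cover: who they are, what we discussed last time, "
        "what they were concerned about, and what they need addressed."
    )


ctx = CallContext(
    person="Dana",
    background="VP Finance; previously in audit.",
    last_transcript="Dana: My main worry is Q3 cash.",
    open_action_items=["Send updated cash forecast"],
)
print(build_brief_prompt(ctx))
```

Keeping the structure fixed and swapping only the inputs is what makes this a 20-minute build rather than a new prompt before every call.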

For a CFO doing investor calls, board meetings, vendor negotiations, customer escalations, and 1:1s, a 30-second AI-generated brief before every conversation is a 10x advantage.

You walk into every meeting already knowing what the other person cared about last time, what they said about your competitor, and what they’re worried about right now.

Almost nobody in your industry is doing this yet.

The tools have existed for 18 months. The workflow takes 20 minutes to build.

The advantage compounds every quarter.

7. Stop locking AI down. Start governing it

This is the thread that ran through the entire session, but Glenn made it explicit toward the end.

I still deal with companies who have a very strict policy, or they say you can only use Copilot. There’s a reason I’m not doing a Copilot demo today. It’s garbage. It’s hot garbage. So if you make the decision to limit your employees, they’re figuring out the power of these tools.

The companies that really have the highest security risk are the ones that are locking it down completely. Employees are going to find the most efficient way to do the job, and the company can either benefit from it or not. If you don’t have the right policies in place and you’re trying to lock it down too much, that’s when you’re going to have employees doing really stupid stuff with their data.

The takeaway for CFOs:

The choice isn’t between AI and not AI.

The choice is between using it with your rules or pretending that AI isn’t already part of your finance function. If your company is in the second camp, your job this month is to move it to the first. Not by writing a 40-page policy. By doing three things:

Audit what’s already happening. Ask your team, privately and without judgment, which AI tools they’re already using and what they’re using them for. The list will surprise you.

Pick one approved tool with the right plan. Claude Team, Claude Enterprise, or the equivalent on another platform. SOC 2 Type 2 certification. Zero-retention agreement. This becomes the only sanctioned tool.

Write a one-page policy. What data goes in? What data doesn’t? Who owns the workflow? Who reviews the output? One page. Not 40.

This is the work that separates the CFOs who lead the next decade from the ones who explain why they didn’t.
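For the third step, one option is to keep the one-page policy’s answers as data, so a script or a reviewer can check a proposed use against them. Here is a minimal Python sketch; the class name, tool name, data categories, and roles are all hypothetical examples, and nothing here connects to any vendor’s admin controls.

```python
# Hypothetical sketch: the one-page policy's answers stored as data.
# Tool name, data categories, and roles are illustrative examples only.
from dataclasses import dataclass


@dataclass(frozen=True)
class AIPolicy:
    approved_tool: str             # the single sanctioned tool
    allowed_data: frozenset[str]   # what data goes in (everything else stays out)
    workflow_owner: str            # who owns the workflow
    output_reviewer: str           # who reviews the output

    def may_use(self, tool: str, data_category: str) -> bool:
        """Default-deny: only the approved tool, only listed data categories."""
        return tool == self.approved_tool and data_category in self.allowed_data


POLICY = AIPolicy(
    approved_tool="Claude Team",
    allowed_data=frozenset({"gl_detail", "budget", "board_deck_drafts"}),
    workflow_owner="FP&A lead",
    output_reviewer="Controller",
)

print(POLICY.may_use("Claude Team", "budget"))        # True
print(POLICY.may_use("Claude Team", "employee_pii"))  # False: not listed
print(POLICY.may_use("free chatbot", "budget"))       # False: unapproved tool
```

The check is default-deny: a data category that isn’t explicitly allowed is treated as blocked, which matches the spirit of having one sanctioned tool.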

The thread that ties all 7 together

If I had to summarize the entire 90 minutes of Glenn’s session in one sentence, it would be this:

The question isn’t whether AI will change finance. It’s whether you’re shaping how it changes your finance function or watching it happen to you.

Glenn’s session was the most concrete expression of that I’ve seen. He showed exactly how a real CFO uses Claude in real planning work, with real safety rails, real context, and a real point of view on where it works and where it doesn’t.

The demo is in Saturday’s edition.

The strategy is in this one. Together they’re the playbook.

The next insider session

Glenn’s session was the first in the Insider series. The next one is being scheduled for May. If you want to be in the room live, with the ability to ask questions and challenge what you see, you can upgrade to Insider.

Paid members get the strategic content recap.

Insider members get the live experience.

And that’s all for today.

If this resonated, forward it to one CFO who’s still locking AI down instead of governing it.

See you on Thursday.

Whenever you’re ready, there are 2 ways I can help you:

If you’re building an AI-powered CFO tech startup, I’d love to hear more and explore if it’s a fit for our investment portfolio.

I’m Wouter Born, a CFOTech investor, advisor, and founder of finstory.ai.

Find me on LinkedIn